Tutorial:2D Viseme Textures


Some avatars, most commonly cartoony or low-poly ones, use a sprite sheet or separate textures as "2D visemes" for speech, rather than the more common blendshape visemes. Unlike blendshape visemes, these can't be set up automatically on import, so they require some manual setup to work.

Assumptions

This tutorial will assume the following:

- The avatar or object already has textures for visemes (this tutorial covers applying these textures, not creating them).

- The avatar has already been imported and created.

Tutorial

Viseme Setup

To start, make sure that your avatar contains a VisemeAnalyzer. If viseme blendshapes were detected when the avatar was imported and created, there will already be a VisemeAnalyzer under the Head Proxy slot, and you can skip ahead to Driving The Texture.

Otherwise, navigate to the Head Proxy slot and attach a VisemeAnalyzer component and an AvatarVoiceSourceAssigner component. Set the TargetReference of the AvatarVoiceSourceAssigner to the Source field of the VisemeAnalyzer.

Texture Setup

Before moving on, check how the avatar's viseme textures are set up. They are most likely provided in one of two ways: as an atlased texture, a single texture that contains every viseme state, or as separate textures, one per viseme state. Either type works, but the setup differs slightly for each.

For organizational purposes, it is recommended to keep everything from this point on in a new slot under the mesh that displays the viseme textures. Open a new inspector and navigate to the mesh slot most applicable for visemes (usually a separate head mesh). Create a child slot and give it a descriptive name.

Atlased Texture

Attach an AtlasInfo component and a UVAtlasAnimator component to the newly created slot. In the AtlasInfo component, set GridSize to the number of columns and rows in the atlas (not its size in pixels), and set GridFrames to the number of individual viseme textures on it. For example, if there are 11 viseme textures arranged in a grid 4 wide and 3 tall, set GridSize to (4, 3) and GridFrames to 11.
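A quick way to sanity-check the two AtlasInfo values is to compare GridFrames with the number of cells the grid provides. The sketch below is a plain Python model of that arithmetic, not part of Resonite; the function name `empty_cells` is hypothetical.

```python
def empty_cells(grid_size: tuple[int, int], grid_frames: int) -> int:
    """Return how many grid cells are left unused by the viseme frames.

    grid_size is (columns, rows), mirroring AtlasInfo's GridSize;
    grid_frames mirrors GridFrames.
    """
    columns, rows = grid_size
    capacity = columns * rows
    if not 0 < grid_frames <= capacity:
        raise ValueError(
            f"GridFrames={grid_frames} does not fit a {columns}x{rows} grid"
        )
    return capacity - grid_frames


# The example from the text: 11 visemes in a 4x3 grid leaves one cell empty.
print(empty_cells((4, 3), 11))  # 1
```

If the function raises, either GridSize or GridFrames does not match the atlas.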

Find (or create and apply) the material that will hold your atlased viseme texture. Drag the TextureScale label into the UVAtlasAnimator's ScaleField field, and the TextureOffset label into the UVAtlasAnimator's OffsetField field. Drag the AtlasInfo component from before into the AtlasInfo field of the UVAtlasAnimator.

Take note of where each viseme lies on the atlas. The atlas is split into a grid of equally sized rectangles according to GridSize, and the Frame field of the UVAtlasAnimator selects which rectangle to display, from 0 to GridFrames - 1. Frames are counted in reading order: left to right along each row, then row by row from the top. Frame 0 is the top-left rectangle, frame 1 is the one to its right (or below it, if the grid has only one column), and so on.
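The reading-order rule can be written out as arithmetic, which helps when noting down which frame each viseme uses. The following Python sketch converts a Frame number into a (column, row) cell and into a UV scale and offset. The cell math follows the description above; the UV math assumes UV (0, 0) is the bottom-left corner of the texture, which is an assumption about the convention and not a statement of exactly what UVAtlasAnimator computes internally. Function names are hypothetical.

```python
from fractions import Fraction


def frame_cell(frame: int, grid_size: tuple[int, int], grid_frames: int) -> tuple[int, int]:
    """Return (column, row) of a frame, counted in reading order from the top-left."""
    columns, _rows = grid_size
    if not 0 <= frame < grid_frames:
        raise ValueError(f"frame must be between 0 and {grid_frames - 1}")
    return frame % columns, frame // columns


def frame_scale_offset(frame: int, grid_size: tuple[int, int], grid_frames: int):
    """Assumed UV math with (0, 0) at the bottom-left of the texture."""
    columns, rows = grid_size
    column, row = frame_cell(frame, grid_size, grid_frames)
    # Each cell covers 1/columns of the width and 1/rows of the height.
    scale = (Fraction(1, columns), Fraction(1, rows))
    # Row 0 is the top row, so it sits at the highest V values.
    offset = (Fraction(column, columns), Fraction(rows - 1 - row, rows))
    return scale, offset


print(frame_cell(5, (4, 3), 11))  # (1, 1): second column, second row
print(frame_cell(1, (1, 4), 4))   # (0, 1): directly below frame 0
```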

Separated Textures

Attach an AssetMultiplexer<ITexture2D> component to the newly created slot. Add one entry per viseme texture and assign the textures to them. Take note of which texture you assigned to which index.

Find (or create and apply) the material that will display the current viseme texture. Drag its AlbedoTexture label into the Target field of the AssetMultiplexer.
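The multiplexer's job can be modeled as a list lookup: whatever texture sits at Index ends up in Target. The Python sketch below uses hypothetical file names and a hypothetical viseme-to-index table standing in for the notes from the previous step, and checks that every noted index actually has a texture behind it. It is a model for planning, not Resonite code.

```python
# Hypothetical file names standing in for the textures assigned to the
# multiplexer, listed in the same order as its entries (entry 0 first).
textures = ["mouth_closed.png", "mouth_pp.png", "mouth_ff.png", "mouth_open.png"]

# Hypothetical notes on which viseme uses which multiplexer index.
viseme_to_index = {"Silence": 0, "PP": 1, "FF": 2, "aa": 3}


def multiplexer_target(textures: list[str], index: int) -> str:
    """Model of the selection: the texture at Index is what ends up in Target."""
    return textures[index]


def check_notes(textures: list[str], notes: dict[str, int]) -> list[str]:
    """Return the visemes whose noted index has no texture behind it."""
    return [name for name, index in notes.items() if not 0 <= index < len(textures)]


print(multiplexer_target(textures, viseme_to_index["FF"]))  # mouth_ff.png
print(check_notes(textures, viseme_to_index))  # []
```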

Driving The Texture

This tutorial covers two ways of driving the texture: the more straightforward and intuitive ProtoFlux way, and the less straightforward but (subjectively) more organized Component way. There is no significant difference in performance between them; the choice comes down to organization and what you want to do with the setup. Both are shown simply to demonstrate that the same result can be reached in more than one way.

The ProtoFlux Way

Create a Source node for each viseme field of the VisemeAnalyzer that you want to map (at the time of writing, there are 16, from Silence to LaughterProbability). Plug all of these sources into a Value Max Multi node, then plug that node's output into the Match input of an Index Of First Match node.

Next, plug the same sources into the remaining inputs of the Index Of First Match node, ordered so that each input's index equals the frame or multiplexer index of that viseme's texture. For example, if the FF viseme uses index/frame 4 in your setup, its source must be plugged into index 4 of the Index Of First Match node, which is the 6th input overall (after Match and indices 0 to 3).

Finally, create a drive for the UVAtlasAnimator's Frame field (or the AssetMultiplexer's Index field) and plug the output of the Index Of First Match node into it.
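Together, the two nodes pick the index of the strongest viseme. The Python sketch below models that logic with hypothetical viseme strengths; the function names are stand-ins for the ProtoFlux nodes, not real APIs. Note that only the inputs to Index Of First Match need to be ordered by frame; the order of the inputs to Value Max Multi does not matter.

```python
def value_max_multi(values: list[float]) -> float:
    """Model of Value Max Multi: the largest of its inputs."""
    return max(values)


def index_of_first_match(match: float, options: list[float]) -> int:
    """Model of Index Of First Match: the first input index equal to Match.

    Match here is always one of the options, so a match always exists;
    list.index would raise ValueError otherwise.
    """
    return options.index(match)


# Hypothetical viseme strengths for one moment, already ordered by frame/index:
# frame 0 = Silence, 1 = PP, 2 = FF, 3 = aa.
sources_by_frame = [0.05, 0.10, 0.70, 0.15]

frame = index_of_first_match(value_max_multi(sources_by_frame), sources_by_frame)
print(frame)  # 2
```

Because the maximum is always exactly one of the inputs, the equality comparison is safe even for floating-point values. If two visemes tie for the maximum, the one plugged into the lower index wins.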

The Component Way

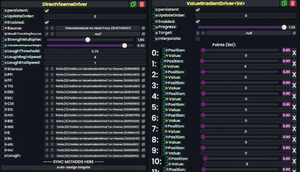

Under the slot you created, add a DirectVisemeDriver component and a ValueGradientDriver<int> component. In the ValueGradientDriver, set Progress to 1.00 and turn Interpolate off. Add one point for every viseme field on the DirectVisemeDriver (16 at the time of writing). For each point, drag its Position field into the next unassigned viseme field on the DirectVisemeDriver. Then set each point's Value to the UVAtlasAnimator frame or AssetMultiplexer index of the texture you want to show for that viseme.

Finally, drag the Frame label on the UVAtlasAnimator or Index field of the AssetMultiplexer into the Target field on the ValueGradientDriver.
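The trick behind this setup is that each point's Position carries its viseme's strength, so with Progress fixed at 1.00 the strongest viseme's point is the one closest to Progress. The Python sketch below models that, assuming the driver (with Interpolate off) outputs the value of the point with the largest Position not exceeding Progress. That rule is an assumption about the component, not documented behavior, and the names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class GradientPoint:
    position: float  # driven by one viseme's strength through DirectVisemeDriver
    value: int       # the frame or multiplexer index typed in by hand


def gradient_without_interpolation(points: list[GradientPoint], progress: float = 1.0) -> int:
    """Assumed behavior: return the value of the point with the largest
    position that does not exceed progress (the first such point on a tie)."""
    candidates = [p for p in points if p.position <= progress]
    if not candidates:
        raise ValueError("no point at or below progress")
    return max(candidates, key=lambda p: p.position).value


# Hypothetical viseme strengths; each point's value is the texture for that viseme.
points = [
    GradientPoint(position=0.05, value=0),  # Silence
    GradientPoint(position=0.10, value=1),  # PP
    GradientPoint(position=0.70, value=2),  # FF
    GradientPoint(position=0.15, value=3),  # aa
]
print(gradient_without_interpolation(points))  # 2
```

Since each point's Value is typed in by hand rather than implied by its position in the list, several visemes can point at the same frame or index.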

Conclusion

By now, 2D visemes should be fully set up for your avatar. If a viseme does not map to any texture, you can safely delete its point in the ValueGradientDriver. It is highly recommended to delete points from the highest index to the lowest, because removing a point shifts the indices of every point after it, which makes it easy to lose track of which point is which.
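The reason for deleting from the highest index down can be shown with an ordinary list. In this Python sketch the point labels are hypothetical, and `remove_points` is a hypothetical helper that removes entries at their original indices in descending order.

```python
def remove_points(points: list[str], unused: list[int]) -> list[str]:
    """Delete the entries at the given original indices, highest index first,
    so earlier deletions never shift the indices still to be removed."""
    remaining = list(points)
    for index in sorted(unused, reverse=True):
        del remaining[index]
    return remaining


# Hypothetical point labels; suppose the points at indices 1 and 3 are unused.
points = ["Silence", "PP", "FF", "TH", "DD"]
print(remove_points(points, [1, 3]))  # ['Silence', 'FF', 'DD']
```

Deleting in ascending order instead would remove PP first, after which index 3 refers to DD rather than TH, so the wrong point would be deleted.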

For more in-depth details on how particular components or steps work, it is highly recommended to read the wiki pages for the related components and topics.

Integration With Other Mouth Textures

Some avatars may have mouth expressions outside of visemes that should take precedence over the normal viseme textures.

Whether you use Components or ProtoFlux, you can add a ValueMultiplex component to the working slot and use it to drive the Frame of the UVAtlasAnimator or the Index of the AssetMultiplexer. Point your viseme driver at one of the multiplex's values instead, and fill the remaining values with the frames or indices of the other mouth textures. Then, most likely using ProtoFlux, drive the Index of the multiplex depending on whether you want the visemes or another texture to show.
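One way to lay out the multiplex is to reserve its first value for the live viseme frame and use the rest for fixed expression frames. The Python sketch below models that layout; the layout itself, the frame numbers, and the function names are all hypothetical, chosen only to illustrate the selection logic.

```python
from typing import Optional


def multiplex(values: list[int], index: int) -> int:
    """Model of a value multiplex: output the entry selected by index."""
    return values[index]


def mouth_frame(viseme_frame: int, expression_frames: list[int], expression: Optional[int]) -> int:
    """Hypothetical layout: entry 0 carries the live viseme frame,
    entries 1.. hold fixed frames for other expressions.
    expression is None for normal speech, else an index into expression_frames."""
    values = [viseme_frame, *expression_frames]
    index = 0 if expression is None else expression + 1
    return multiplex(values, index)


# Hypothetical atlas frames: 9 = smile, 10 = frown.
print(mouth_frame(4, [9, 10], None))  # 4 (visemes shown)
print(mouth_frame(4, [9, 10], 1))     # 10 (frown overrides)
```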